Scaling one Instagram account is operationally simple. Scaling twenty, fifty, or one hundred simultaneously requires infrastructure. When agencies enter the realm of multi-account Instagram automation, growth stops being a tactical exercise and becomes an architectural challenge.

Most automation strategies fail not because they are ineffective, but because they are fragile. They are built around temporary loopholes, static limits, or short-term tactics. When Instagram rolls out an algorithm update, these systems collapse. Accounts face restrictions. Deliverability drops. Messaging limits tighten.

Agencies that survive and scale long term approach automation differently. They design behavior-driven, infrastructure-stable systems that align with platform logic rather than exploit its gaps. This article explores the architecture required to sustain multi-account Instagram automation in an environment where algorithms constantly evolve.

Why Most Multi-Account Automation Breaks After Algorithm Updates

Most multi-account Instagram automation systems do not fail because they are poorly engineered. They fail because they are optimized for the present, not designed for the future. When Instagram introduces an algorithm update, it rarely announces a new rule. Instead, it refines pattern recognition, tightens correlation thresholds, and improves behavioral clustering models. Fragile automation architectures cannot absorb these refinements.

At scale, Instagram does not evaluate accounts independently. It analyzes networks of behavior. When agencies manage dozens of profiles using similar workflows, synchronized timing, identical engagement ratios, and uniform messaging flows, they unintentionally create what detection systems interpret as coordinated automation ecosystems.

Algorithm updates frequently strengthen three areas: cross-account correlation, behavioral anomaly detection, and session-level consistency checks. Automation stacks built on standardized playbooks become vulnerable overnight. Daily limits that previously appeared safe suddenly trigger friction because correlation sensitivity has increased.

One of the most common structural weaknesses is behavioral uniformity. Agencies deploy identical posting cadences, identical DM pacing, identical follow/unfollow cycles, and identical engagement thresholds across every account. From an operational perspective, this maximizes efficiency. From an algorithmic perspective, it creates pattern density.

When Instagram updates its clustering models, it begins comparing accounts not only to organic baselines, but to each other. If fifty accounts initiate conversations within similar time windows, use similar linguistic patterns, and escalate interactions in similar ways, the system recognizes this as systemic automation rather than independent user behavior.

Another fragile layer is static limit thinking. Many automation systems are built around assumed safe numbers: a certain number of DMs per day, a certain engagement ratio, a certain follow threshold. Algorithm updates rarely change the visible limits; they change how the same actions are evaluated in context. An action that was acceptable against a trusted behavioral history may become suspicious in a uniform, high-correlation environment.

Infrastructure shortcuts compound this vulnerability. When multiple accounts operate from similar device fingerprints, unstable environments, or overlapping session behaviors, detection becomes easier. Updates that strengthen device-level analysis disproportionately impact networks built on shared technical footprints.

Scripted messaging is another breaking point. Even well-crafted templates become liabilities at scale. As Instagram improves natural language similarity detection, repeated phrasing across accounts becomes easier to cluster. After an update, linguistic repetition that previously went unnoticed can trigger Instagram DM restrictions, message filtering, or shadow suppression.

The underlying issue is architectural rigidity. Fragile systems assume that the algorithm will remain static. Resilient systems assume that detection will improve. Agencies that design automation purely around current loopholes inevitably encounter enforcement when those loopholes close.

Algorithm updates do not create new risks. They expose existing weaknesses.

Sustainable multi-account Instagram automation architecture accounts for this reality. It prioritizes behavioral diversity over uniformity. It embeds timing variance across accounts. It decentralizes conversational patterns. It maintains infrastructure isolation. Most importantly, it aligns activity with authentic user behavior rather than platform blind spots.

When systems are built around natural interaction models instead of exploitative patterns, updates become less disruptive. Behavioral realism remains valid even as detection evolves. Correlation analysis loses power when diversity is intentional.

Ultimately, multi-account automation breaks after algorithm updates because it was engineered for efficiency, not resilience. Agencies that design for adaptability instead of optimization create systems that absorb change rather than collapse under it.

Infrastructure Isolation and Device-Level Stability

In multi-account environments, behavior alone is not enough. Even the most carefully diversified engagement strategy can fail if the underlying technical foundation is unstable. This is where infrastructure isolation and device-level stability become decisive factors in sustainable multi-account Instagram automation.

Instagram does not analyze actions purely at the surface level. It evaluates accounts through a complex combination of device fingerprints, session consistency, IP behavior, login patterns, and environmental continuity. When multiple accounts share technical signals too closely, correlation risk increases dramatically, regardless of how human their messaging or engagement appears.

One of the most common architectural weaknesses in fragile automation setups is shared infrastructure. Accounts operate from the same virtual environment, rotate through unstable sessions, or log in and out across fluctuating device contexts. While this may appear operationally convenient, it creates technical clustering. Algorithm updates that strengthen device-level detection immediately expose these overlaps.

Isolation is not about hiding activity. It is about maintaining clean behavioral boundaries between accounts. Each profile should exist within a controlled, persistent environment that mirrors real user conditions. Sessions should be stable over time. Logins should occur predictably. Device signals should remain consistent instead of shifting erratically.

Session continuity is particularly important. Real users do not appear from new devices daily. They maintain consistent usage patterns. When accounts repeatedly trigger fresh device signals or irregular session resets, Instagram’s systems interpret this as elevated risk. Updates that enhance anomaly detection often target these inconsistencies first.

Infrastructure isolation also protects against cascade failures. In poorly designed automation networks, a single flagged account can expose technical links to others, so shared environments amplify enforcement. Proper isolation contains the damage: if one account encounters verification challenges or temporary restrictions, the rest of the system remains insulated.

Device-level stability becomes even more critical after algorithm updates. As Instagram refines its detection models, emphasis frequently shifts toward technical integrity signals. Messaging behavior may appear compliant, but unstable infrastructure can override behavioral safety. Agencies that neglect this layer often misdiagnose restrictions as content issues when the root cause lies in environmental inconsistency.

Resilient automation architecture treats infrastructure as long-term capital. Devices, sessions, and account environments are maintained deliberately. Behavioral activity is layered on top of technical stability rather than compensating for its absence.

Importantly, infrastructure stability also supports performance. Accounts that demonstrate consistent session history and predictable device patterns tend to accumulate trust gradually. That trust appears to influence deliverability, limit flexibility, and friction thresholds in subtle ways. Over time, stable infrastructure becomes an invisible growth accelerator.

In scalable Instagram automation systems, infrastructure isolation is not an optional enhancement. It is the foundation upon which behavioral diversity and conversational realism can operate safely. Without it, even sophisticated strategies remain vulnerable to technical correlation.

Ultimately, algorithm updates do not create risk for stable systems. They reveal instability in fragile ones. Agencies that invest in device-level integrity and environmental consistency build automation architecture that withstands refinement instead of collapsing under scrutiny.

In multi-account ecosystems, growth depends not only on what accounts do, but on where and how they exist.

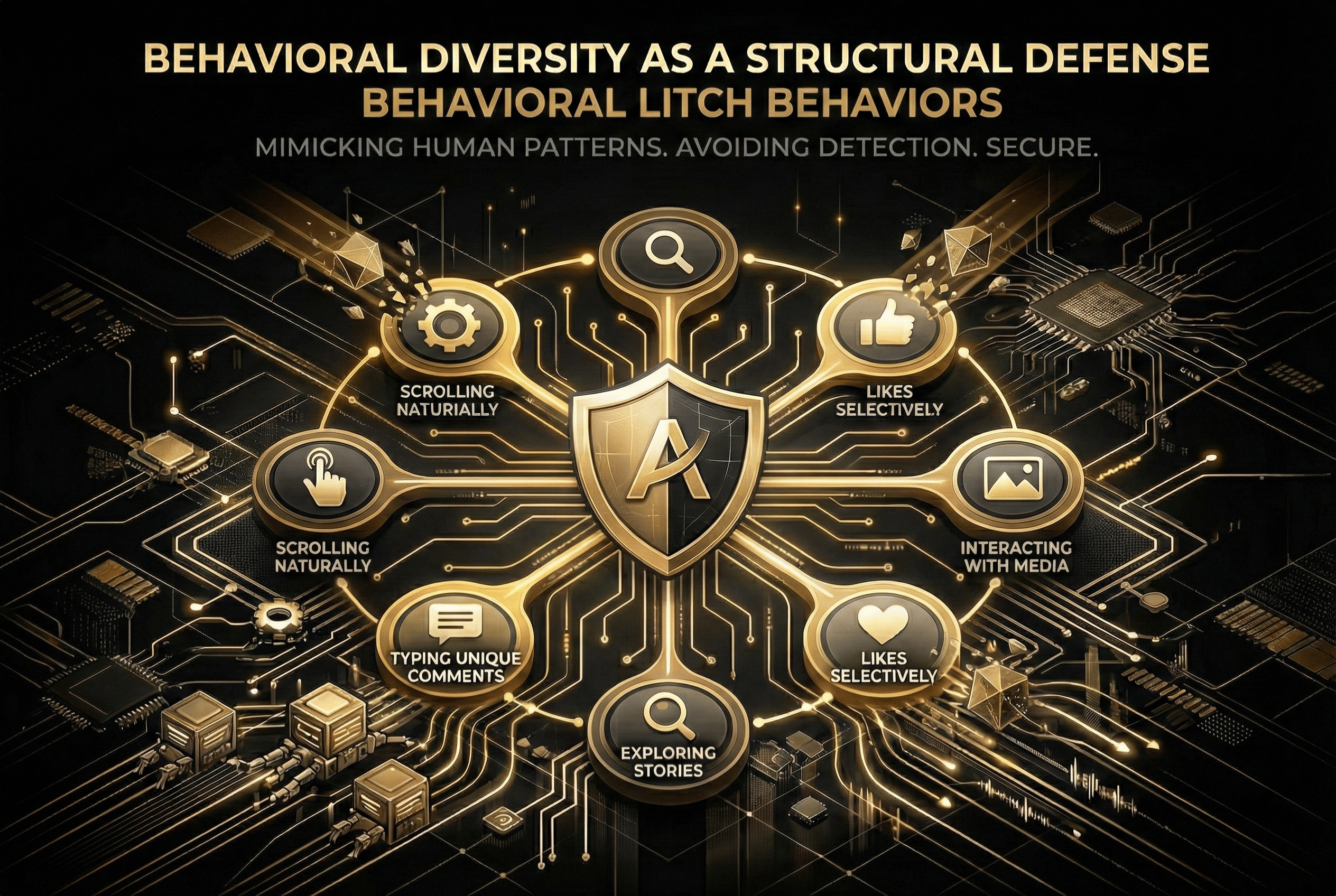

Behavioral Diversity as a Structural Defense

If infrastructure isolation protects accounts at the technical layer, behavioral diversity protects them at the algorithmic layer. In scalable multi-account Instagram automation, diversity is not a cosmetic choice. It is a structural defense mechanism against correlation detection.

Instagram’s detection systems do not look only for “automation.” They look for behavioral similarity at scale. When multiple accounts engage, message, follow, and post in similar ways, patterns emerge. Even if each action individually appears human, collective uniformity exposes coordination.

This is where many automation strategies collapse. Agencies optimize workflows for efficiency. They standardize engagement ratios, synchronize posting windows, mirror messaging cadence, and replicate growth sequences across every account. Operationally, this is clean. Algorithmically, it is dangerous.

Real users are inconsistent by nature. They log in at different times. They engage unevenly. They escalate conversations differently. Some are passive observers. Others are highly interactive. This natural variance creates behavioral noise that protects authenticity. Multi-account automation must intentionally replicate this variance.

Behavioral diversity begins with timing dispersion. Accounts should not operate within identical daily windows. Some may show morning activity peaks. Others may engage late at night. Session lengths should vary. Activity intensity should fluctuate organically rather than follow predictable curves.

Engagement style must also differ subtly between accounts. One profile may favor story interactions. Another may lean toward feed engagement. A third may initiate conversations more frequently but maintain shorter exchanges. These distinctions reduce cross-account correlation risk.

In Instagram DM automation, diversity becomes even more critical. Messaging cadence should not mirror across profiles. Escalation timing should vary. Linguistic expression should shift naturally. Even subtle repetition in phrasing or progression structure can become detectable when multiplied across dozens of accounts.

Algorithm updates often strengthen clustering analysis. When Instagram improves its ability to compare accounts against each other, standardized automation stacks become exposed rapidly. Behavioral diversity acts as insulation. It prevents accounts from forming detectable automation clusters.

Importantly, diversity must be intentional, not random. Randomization without structure creates erratic behavior, which can be equally suspicious. Effective diversity is controlled. It operates within realistic human boundaries while ensuring that no two accounts evolve identically.

Advanced agencies implement what can be described as behavioral segmentation models. Accounts are grouped into archetypes with distinct engagement rhythms, messaging tendencies, and interaction depth profiles. Within each archetype, micro-variations are introduced. Across archetypes, divergence is stronger.

This layered variation creates resilience. When algorithm updates refine detection thresholds, accounts remain insulated because they do not share concentrated behavioral signatures. The system absorbs refinement rather than triggering mass restriction.

Behavioral diversity also improves performance outcomes. Conversations feel less repetitive. Engagement appears more authentic. Audiences encounter variation instead of predictability. This strengthens both platform trust and user perception.

Ultimately, behavioral diversity is not about complexity for its own sake. It is about designing automation that mirrors the unpredictability of real human ecosystems. When accounts behave like independent individuals rather than synchronized assets, algorithm updates lose their disruptive power.

In sustainable multi-account Instagram automation architecture, diversity is not an afterthought. It is the structural layer that allows scale to remain invisible.

Centralized Control With Decentralized Expression

At scale, chaos and rigidity are equally dangerous. Pure decentralization leads to inconsistency, unmanaged risk, and unpredictable enforcement exposure. Total centralization, on the other hand, creates uniform behavior that algorithms can cluster easily. Sustainable multi-account Instagram automation architecture requires a deliberate balance: centralized control paired with decentralized expression.

Centralized control provides structural stability. Agencies need a unified oversight layer that monitors engagement velocity, DM volume, session frequency, deliverability signals, and account health metrics across all profiles. Without this visibility, scaling becomes reactive. Restrictions appear unexpectedly. Messaging limits tighten without early warning. Performance volatility increases.

A centralized system allows agencies to detect anomalies early. Sudden drops in reply depth, unusual friction in message delivery, or shifts in engagement patterns can be identified before they escalate into account-level restrictions. This proactive monitoring is essential in environments where Instagram algorithm updates refine enforcement models continuously.
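The early-warning idea can be illustrated with a minimal sketch. It assumes the monitoring layer already records one health metric per account per day (here a hypothetical reply rate) and flags any account whose latest value falls well below its own recent baseline. The function name, the metric, and the thresholds are illustrative assumptions, not values taken from Instagram or from any particular tool.

```python
from statistics import mean, stdev
from typing import Dict, List


def flag_metric_drops(
    history: Dict[str, List[float]],
    latest: Dict[str, float],
    z_threshold: float = 2.5,   # assumed sensitivity; tune per metric
    min_points: int = 5,        # assumed minimum baseline length
) -> List[str]:
    """Return account ids whose latest value dropped sharply below their own baseline."""
    flagged = []
    for account_id, values in history.items():
        # Skip accounts with no fresh reading or too little history to judge.
        if account_id not in latest or len(values) < min_points:
            continue
        baseline = mean(values)
        spread = stdev(values)
        if spread == 0:
            # Perfectly flat history: any decrease counts as a drop.
            if latest[account_id] < baseline:
                flagged.append(account_id)
            continue
        z_score = (latest[account_id] - baseline) / spread
        if z_score <= -z_threshold:
            flagged.append(account_id)
    return flagged


# Hypothetical daily reply rates for three accounts.
history = {
    "acct_a": [0.30, 0.32, 0.29, 0.31, 0.30],
    "acct_b": [0.20, 0.22, 0.19, 0.21, 0.20],
    "acct_c": [0.40, 0.40],  # too little history, ignored
}
latest = {"acct_a": 0.31, "acct_b": 0.05, "acct_c": 0.0}

print(flag_metric_drops(history, latest))
```

Each account is compared against its own history and not against a network-wide average, so accounts with naturally different engagement levels are not flagged merely for being different from one another.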

However, centralized control must not translate into behavioral uniformity. This is where decentralized expression becomes critical. Each account must retain its own behavioral identity profile. Messaging cadence, engagement style, posting rhythm, and conversational tone should differ subtly across accounts. Central systems define boundaries. Individual profiles operate uniquely within those boundaries.

This model mirrors how large organizations function in reality. Corporate governance ensures compliance and risk management, but regional teams adapt communication to local contexts. In the same way, scalable Instagram automation must operate within a shared compliance framework while allowing distributed behavioral variation.

Decentralized expression reduces cross-account correlation risk, which is one of the primary vulnerabilities in multi-account growth strategies. When accounts escalate conversations differently, vary session timing organically, and express brand voice with micro-variations, clustering models lose precision. Algorithm updates that refine pattern detection have less impact because behavior is already diversified.

At the same time, centralized control ensures that variation does not drift into instability. Limits are enforced consistently. Velocity adjustments can be applied across the network when platform conditions shift. If Instagram tightens messaging sensitivity, agencies can recalibrate pacing globally without collapsing the system.
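One way to picture global recalibration is a shared policy object that holds each account's own daily cap plus a single network-wide multiplier. The sketch below is an assumption about how such a governance layer might be structured; the class name, field names, and numbers are hypothetical and do not represent real platform limits.

```python
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class PacingPolicy:
    """Central pacing rules: per-account caps scaled by one global multiplier."""

    global_multiplier: float = 1.0
    base_caps: Dict[str, int] = field(default_factory=dict)

    def effective_cap(self, account_id: str) -> int:
        # Round down so a reduced multiplier never raises a cap.
        return int(self.base_caps[account_id] * self.global_multiplier)

    def allows(self, account_id: str, sent_today: int) -> bool:
        # An action is allowed only while the account is under its effective cap.
        return sent_today < self.effective_cap(account_id)


# Hypothetical caps; each account keeps its own baseline.
policy = PacingPolicy(base_caps={"acct_a": 40, "acct_b": 25})
print(policy.effective_cap("acct_a"), policy.effective_cap("acct_b"))

# Conditions tighten: slow the whole network with one change.
policy.global_multiplier = 0.5
print(policy.effective_cap("acct_a"), policy.effective_cap("acct_b"))
```

Because the multiplier scales each account's own baseline, a network-wide slowdown preserves the relative differences between accounts while still enforcing limits consistently.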

This hybrid structure also enhances operational scalability. Teams can manage dozens or hundreds of accounts without micromanaging each one. Governance rules guide activity. Expression remains adaptive. Risk remains contained.

Importantly, centralized oversight enables strategic agility. When algorithm updates occur, agencies with unified monitoring can identify which behavioral dimensions are affected. They can adjust DM pacing, engagement ratios, or session intensity quickly. Decentralized behavior ensures that changes do not produce synchronized reactions that expose automation.

Ultimately, centralized control with decentralized expression transforms automation from a mechanical system into a managed ecosystem. It aligns operational discipline with human variability. It protects accounts at scale without sacrificing authenticity.

In resilient multi-account Instagram automation architecture, control provides safety. Expression provides invisibility. The combination allows growth to continue even as algorithms evolve.

Algorithm updates are inevitable. Enforcement models will continue to evolve. The agencies that thrive in multi-account Instagram automation environments are not those chasing loopholes. They are those building systems aligned with platform fundamentals.

By investing in infrastructure isolation, embedding behavioral diversity, maintaining centralized oversight, and prioritizing conversational realism, agencies create automation architectures that absorb change rather than collapse under it.

Sustainable growth is not about finding static limits. It is about building systems Instagram has no reason to penalize.

In a constantly evolving ecosystem, resilience outperforms speed. Agencies that design for adaptation instead of exploitation ensure that their Instagram automation systems survive algorithm updates and continue scaling long term.